Nvidia is reportedly preparing to launch a new inference processor designed to speed up AI responses for OpenAI and other enterprise clients, according to sources cited by The Wall Street Journal.

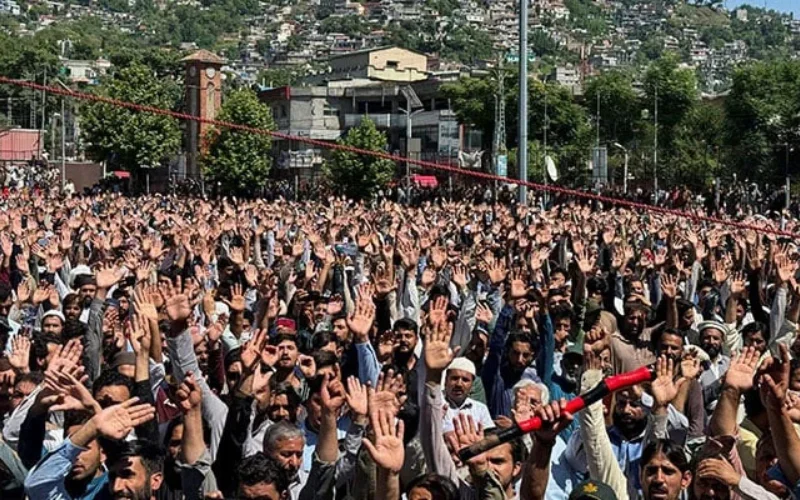

The upcoming system targets inference computing, the stage at which trained AI models generate responses to user queries. The platform is expected to be unveiled at Nvidia’s GTC developer conference in San Jose next month.

Insiders indicate that the system will incorporate proprietary technology from AI chip startup Groq, with the aim of reducing latency and increasing throughput.

Reuters has not independently verified the report, and neither Nvidia nor OpenAI responded immediately to inquiries.

Earlier this month, OpenAI reportedly raised concerns about how quickly Nvidia’s existing hardware responds on compute-intensive tasks such as software development and AI-to-AI communication.

According to one source, OpenAI is seeking new hardware capable of meeting approximately 10% of its inference computing demand.

OpenAI has reportedly explored collaborations with emerging AI chipmakers, including Cerebras and Groq, to accelerate inference processing. However, Nvidia’s $20 billion licensing deal with Groq reportedly precluded OpenAI from finalizing its own agreement with the startup.

In September, Nvidia disclosed plans to invest up to $100 billion in OpenAI, a partnership that gives the chipmaker an equity stake and provides the AI firm with capital to expand its computing infrastructure.

The reported launch underscores intensifying competition in AI hardware, as chipmakers race to deliver low-latency, high-throughput platforms for generative AI workloads.